Researchers Incorporate Computer Vision, Uncertainty into AI for Robotic Prosthetics

Lobaton’s lab has developed AI-based software that integrates with existing hardware, enabling people who use robotic prosthetics to walk in a safer, more natural manner on different types of terrain.

May 28, 2020 | Staff

Researchers have developed new software that can be integrated with existing hardware to enable people using robotic prosthetics or exoskeletons to walk in a safer, more natural manner on different types of terrain. The new framework incorporates computer vision into prosthetic leg control, and includes robust artificial intelligence (AI) algorithms that allow the software to better account for uncertainty.

“Lower-limb robotic prosthetics need to execute different behaviors based on the terrain users are walking on,” says Edgar Lobaton, co-author of a paper on the work and an associate professor of electrical and computer engineering at North Carolina State University. “The framework we’ve created allows the AI in robotic prostheses to predict the type of terrain users will be stepping on, quantify the uncertainties associated with that prediction, and then incorporate that uncertainty into its decision-making.”

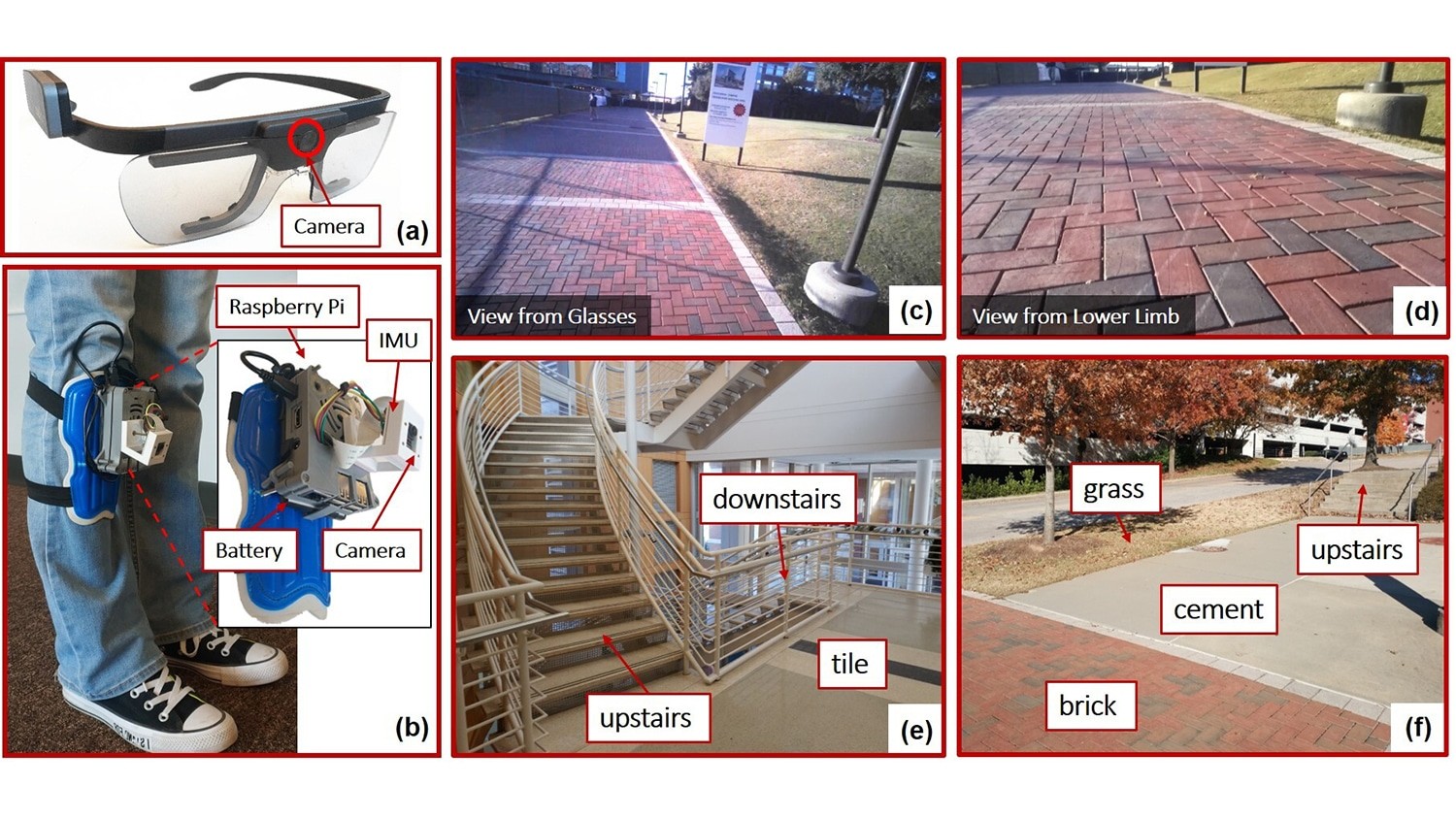

The researchers focused on distinguishing between six different terrains that require adjustments in a robotic prosthetic’s behavior: tile, brick, concrete, grass, “upstairs” and “downstairs.”

“If the degree of uncertainty is too high, the AI isn’t forced to make a questionable decision – it could instead notify the user that it doesn’t have enough confidence in its prediction to act, or it could default to a ‘safe’ mode,” says Boxuan Zhong, lead author of the paper and a recent Ph.D. graduate from NC State.
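The fallback behavior Zhong describes can be sketched as a simple decision rule. The following is a minimal illustration, not the researchers' implementation: the function names, the `safe_mode` label, and both threshold values are assumptions chosen for the example, and the input is assumed to be a calibrated probability for each of the six terrain classes.

```python
import math

# The six terrain classes named in the article.
TERRAINS = ["tile", "brick", "concrete", "grass", "upstairs", "downstairs"]


def normalized_entropy(probs):
    """Shannon entropy of a probability vector, scaled to the range [0, 1]."""
    h = -sum(p * math.log(p) for p in probs if p > 0)
    return h / math.log(len(probs))


def decide(probs, min_confidence=0.8, max_entropy=0.5):
    """Return the predicted terrain, or 'safe_mode' if the prediction is too uncertain.

    Both thresholds are hypothetical; a real controller would tune them to
    how much error the application can tolerate.
    """
    best = max(range(len(probs)), key=lambda i: probs[i])
    if probs[best] < min_confidence or normalized_entropy(probs) > max_entropy:
        return "safe_mode"
    return TERRAINS[best]


confident = [0.95, 0.01, 0.01, 0.01, 0.01, 0.01]
ambiguous = [0.30, 0.30, 0.10, 0.10, 0.10, 0.10]
print(decide(confident))  # acts on the prediction
print(decide(ambiguous))  # falls back instead of guessing
```

Raising `min_confidence` makes the controller more conservative, trading more frequent fallbacks for fewer wrong terrain-specific behaviors.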

The new “environmental context” framework incorporates both hardware and software elements. The researchers designed the framework for use with any lower-limb robotic exoskeleton or robotic prosthetic device, but with one additional piece of hardware: a camera. In their study, the researchers used cameras worn on eyeglasses and cameras mounted on the lower-limb prosthesis itself. The researchers evaluated how the AI was able to make use of computer vision data from both types of camera, separately and when used together.

“Incorporating computer vision into control software for wearable robotics is an exciting new area of research,” says Helen Huang, a co-author of the paper. “We found that using both cameras worked well, but required a great deal of computing power and may be cost prohibitive. However, we also found that using only the camera mounted on the lower limb worked pretty well – particularly for near-term predictions, such as what the terrain would be like for the next step or two.” Huang is the Jackson Family Distinguished Professor of Biomedical Engineering in the Joint Department of Biomedical Engineering at NC State and the University of North Carolina at Chapel Hill.

The most significant advance, however, is to the AI itself.

“We came up with a better way to teach deep-learning systems how to evaluate and quantify uncertainty in a way that allows the system to incorporate uncertainty into its decision making,” Lobaton says. “This is certainly relevant for robotic prosthetics, but our work here could be applied to any type of deep-learning system.”

To train the AI system, researchers connected the cameras to able-bodied individuals, who then walked through a variety of indoor and outdoor environments. The researchers then did a proof-of-concept evaluation by having a person with lower-limb amputation wear the cameras while traversing the same environments.

“We found that the model can be appropriately transferred so the system can operate with subjects from different populations,” Lobaton says. “That means that the AI worked well even though it was trained by one group of people and used by somebody different.”

However, the new framework has not yet been tested in a robotic device.

“We are excited to incorporate the framework into the control system for working robotic prosthetics – that’s the next step,” Huang says.

“And we’re also planning to work on ways to make the system more efficient, in terms of requiring less visual data input and less data processing,” says Zhong.

The paper, “Environmental Context Prediction for Lower Limb Prostheses with Uncertainty Quantification,” is published in IEEE Transactions on Automation Science and Engineering. The paper was co-authored by Rafael da Silva, a Ph.D. student at NC State; and Minhan Li, a Ph.D. student in the Joint Department of Biomedical Engineering.

The work was done with support from the National Science Foundation under grants 1552828, 1563454 and 1926998.

-shipman-

Note to Editors: The study abstract follows.

“Environmental Context Prediction for Lower Limb Prostheses with Uncertainty Quantification”

Authors: Boxuan Zhong, Rafael L. da Silva and Edgar Lobaton, North Carolina State University; Minhan Li and He (Helen) Huang, the Joint Department of Biomedical Engineering at North Carolina State University and the University of North Carolina at Chapel Hill

Published: May 22, IEEE Transactions on Automation Science and Engineering

DOI: 10.1109/TASE.2020.2993399

Abstract: Reliable environmental context prediction is critical for wearable robots (e.g. prostheses and exoskeletons) to assist terrain-adaptive locomotion. In this paper, we proposed a novel vision-based context prediction framework for lower limb prostheses to simultaneously predict human’s environmental context for multiple forecast windows. By leveraging Bayesian Neural Networks (BNN), our framework can quantify the uncertainty caused by different factors (e.g. observation noise, and insufficient or biased training) and produce a calibrated predicted probability for online decision making. We compared two wearable camera locations (a pair of glasses and a lower limb device), independently and conjointly. We utilized the calibrated predicted probability for online decision making and fusion. We demonstrated how to interpret deep neural networks with uncertainty measures and how to improve the algorithms based on the uncertainty analysis. The inference time of our framework on a portable embedded system was less than 80ms per frame. The results in this study may lead to novel context recognition strategies in reliable decision making, efficient sensor fusion and improved intelligent system design in various applications.

Note to Practitioners: This paper was motivated by two practical problems in computer vision for wearable robots: (1) Performance of deep neural networks is challenged by real-life disturbances. However, reliable confidence estimation is usually unavailable and the factors causing failures are hard to identify. (2) Evaluating wearable robots by intuitive trial and error is expensive due to the need of human experiments. Our framework produces a calibrated predicted probability as well as three uncertainty measures. The calibrated probability makes it easy to customize the prediction decision criteria by considering how much the corresponding application can tolerate error. In this study, we demonstrated a practical procedure to interpret and improve the performance of deep neural networks with uncertainty quantification. We anticipate that our methodology could be extended to other applications as a general scientific and efficient procedure of evaluating and improving intelligent systems.
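
The abstract does not name its three uncertainty measures or describe how the Bayesian network is sampled, so the sketch below should be read as an illustration of one common approach rather than the paper's method. It assumes the network can be run several times on the same frame with different sampled weights, producing one class-probability vector per pass, and then separates total predictive uncertainty into a data-related part and a model-related part. All function and variable names are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def uncertainty_measures(samples):
    """Summarize uncertainty from repeated stochastic forward passes.

    samples: list of probability vectors, one per pass (an assumed input format).
    Returns the mean prediction plus three commonly used quantities:
      total     - entropy of the mean prediction
      aleatoric - mean entropy of the individual passes (noise in the data)
      epistemic - total minus aleatoric (disagreement between passes)
    """
    n = len(samples)
    k = len(samples[0])
    mean = [sum(s[i] for s in samples) / n for i in range(k)]
    total = entropy(mean)
    aleatoric = sum(entropy(s) for s in samples) / n
    epistemic = total - aleatoric
    return mean, total, aleatoric, epistemic


# Passes that agree: little model-related uncertainty.
agree = [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]]
# Passes that contradict each other: high model-related uncertainty.
disagree = [[1.0, 0.0], [0.0, 1.0]]

for name, passes in (("agree", agree), ("disagree", disagree)):
    mean, total, aleatoric, epistemic = uncertainty_measures(passes)
    print(name, [round(m, 2) for m in mean], round(epistemic, 3))
```

A high model-related term suggests the input lies outside what the training data covered, which corresponds to the "insufficient or biased training" factor the abstract mentions.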